Modern TVs Suck: A Case Against Motion Interpolation

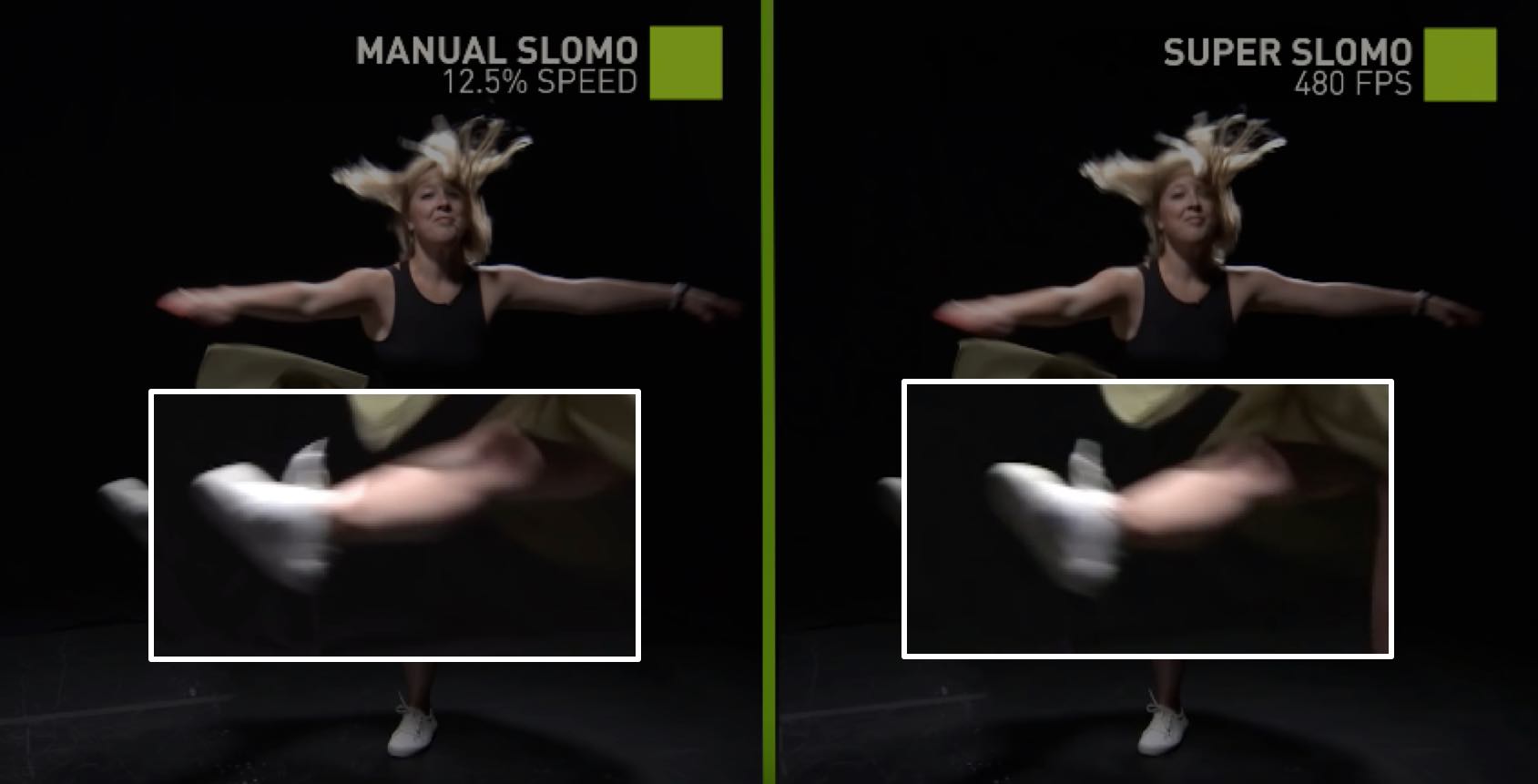

I was reading an article about how Nvidia used a machine learning algorithm (a convolutional neural network) to generate video frames in between captured frames. In a nutshell, Nvidia created a slow motion effect by predicting the motion of the objects in the video and generating images of where the objects would be in between the recorded frames. After the neural network interpolates the frames, they are played back at a normal frame rate. Nvidia's promo video shows an example of a 60fps video of a ballerina being turned into a Super! SloMo! 480fps video. However, the effect itself has long existed in video plugins such as Apple Motion's Optical Flow or RevisionFX's Twixtor. Both of these plugins predict the motion vectors of objects and interpolate the location of each object in between frames. Here's an example of Twixtor being used to slow down a backflip.

You can also observe the interpolation artifacts on the subject's legs when the motion of the backflip is too fast. The plugin failed to morph the legs from one frame to the next when the change in position was too large, and so the uploader sped up the video at that point.
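To make "predict the motion, then generate the in-between frame" concrete, here is a toy sketch in Python. It is an illustration under heavy assumptions, not how Twixtor, Optical Flow, or Nvidia's network actually work: frames are 1D lists of brightness values, the whole frame is assumed to move by one integer shift, and all function names are made up for this example.

```python
def shift_frame(frame, shift):
    """Move every pixel `shift` positions to the right (wrapping at the edges)."""
    n = len(frame)
    return [frame[(i - shift) % n] for i in range(n)]


def estimate_shift(prev, nxt, max_shift):
    """Brute-force search for the shift that best maps `prev` onto `nxt`."""
    best_shift, best_error = 0, float("inf")
    for shift in range(-max_shift, max_shift + 1):
        moved = shift_frame(prev, shift)
        # Sum of absolute differences: 0 means a perfect match.
        error = sum(abs(a - b) for a, b in zip(moved, nxt))
        if error < best_error:
            best_shift, best_error = shift, error
    return best_shift


def interpolate_midframe(prev, nxt, max_shift=4):
    """Motion-compensated in-between frame: move `prev` by half the estimated shift."""
    return shift_frame(prev, estimate_shift(prev, nxt, max_shift) // 2)


def blend_midframe(prev, nxt):
    """Naive alternative: average the two frames, leaving two half-bright ghosts."""
    return [(a + b) / 2 for a, b in zip(prev, nxt)]


def make_frame(position, width=16, size=2):
    """A dark frame with one bright object `size` pixels wide (hypothetical test data)."""
    return [1 if position <= i < position + size else 0 for i in range(width)]


prev = make_frame(2)
slow = make_frame(6)    # object moved 4 px: inside the search window
fast = make_frame(10)   # object moved 8 px: outside the search window

print(interpolate_midframe(prev, slow) == make_frame(4))   # object lands halfway
print(blend_midframe(prev, slow))                          # two ghost copies instead
print(interpolate_midframe(prev, fast) == make_frame(6))   # motion too large to track
```

With the slow move the estimated shift is exact and the object lands halfway between its two positions. With the fast move nothing inside the search window matches, so the guess is wrong and the last comparison comes out false. That is the toy version of the mangled legs in the backflip.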

When using motion interpolation plugins, many tutorials insist on setting the camera shutter speed as fast as possible, on the order of 1/1000s or faster. For those who don't know, shutter speed is how long the camera exposes the sensor to light for each frame. We humans don't really have a shutter speed because our eyes continuously let in light, but we still see motion blur on fast moving objects because our retinas take time to collect and process light information. These pictures of a spinning pinwheel show the effect of different shutter speeds on motion blur, with the leftmost image taken at a very fast shutter speed and the rightmost image at a slow shutter speed.

Having a fast shutter speed means the objects are well defined and sharp, allowing motion interpolation to easily detect the outlines of the objects that are moving. However, most video is shot at slower shutter speeds, close to 1/50s, meaning you get motion blur on moving objects like the pinwheel in the center. This is actually a good thing. Slower shutter speeds create natural looking videos because the motion blur created is similar enough to how we perceive moving objects. If a high shutter speed is used, scenes look jerky and blocky, almost as if you were watching a fast slideshow (some people don't mind this effect). Traditionally, film is shot with the shutter open for half of each frame's duration, so the denominator of the shutter speed is twice the frame rate (30fps -> shutter speed of 1/60s). However, this is the exact opposite of the video settings needed for motion interpolation. For motion interpolation, videos need very fast shutter speeds on the order of 1/1000s or faster, and also have to be shot in well-lit and high contrast environments. Even then the interpolation might fail, as seen in the example above.
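The shutter arithmetic above is easy to check with a few lines of Python. This is a back-of-the-envelope sketch: the function names and the 1200 pixels-per-second object speed are assumptions picked for illustration, and blur length is approximated as speed multiplied by exposure time.

```python
from fractions import Fraction


def traditional_shutter(fps):
    """Exposure time in seconds when the shutter is open for half of each frame."""
    return Fraction(1, 2 * fps)


def blur_length_px(speed_px_per_s, exposure_s):
    """Approximate smear, in pixels, of an object moving at constant speed."""
    return speed_px_per_s * exposure_s


speed = 1200  # hypothetical object speed in pixels per second

print(traditional_shutter(30))                        # exposure for 30fps footage
print(float(blur_length_px(speed, Fraction(1, 50))))  # typical video shutter
print(float(blur_length_px(speed, Fraction(1, 1000))))  # "interpolation-friendly" shutter
```

Under these assumptions the object smears across 24 pixels at 1/50s but only about 1 pixel at 1/1000s, which is why the fast shutter gives interpolation clean outlines to track and why the same footage looks like a slideshow when played back untouched.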

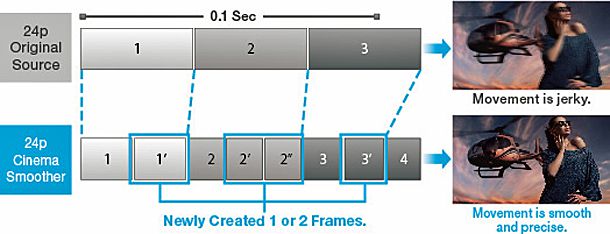

However, these days TVs indiscriminately apply motion interpolation to every video being displayed on the screen. All major TV manufacturers have now added motion interpolation as a feature. These TVs take shows or films shot at lower frame rates such as 24fps and "motion-interpolate" them to frame rates like 72fps. It may be the worst "improvement" that has happened to TVs in all of TV history. This most likely occurred because of feature creep, a phenomenon where excessive features are added to consumer products, resulting in bloat. It is exacerbated by the lack of differentiating features between TVs, other than the OLED/LCD display technologies. Do you personally care what brand of TV you buy if they're all LCDs?
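To put the 24fps to 72fps conversion in numbers, here is a small Python sketch. It assumes the target rate is a whole multiple of the source rate and that the TV keeps every original frame; the function names are hypothetical and this is not any manufacturer's actual pipeline.

```python
from fractions import Fraction


def interpolation_phases(source_fps, target_fps):
    """Positions between two source frames (0 = first, 1 = second) that must be synthesized."""
    if target_fps % source_fps != 0:
        raise ValueError("this sketch only handles whole-number multiples")
    factor = target_fps // source_fps
    return [Fraction(k, factor) for k in range(1, factor)]


def synthetic_fraction(source_fps, target_fps):
    """Share of displayed frames that never came out of the camera."""
    factor = target_fps // source_fps
    return Fraction(factor - 1, factor)


print(interpolation_phases(24, 72))   # two made-up frames per real one
print(synthetic_fraction(24, 72))     # TV example from the text
print(synthetic_fraction(60, 480))    # Nvidia ballerina example
```

Under those assumptions, two out of every three frames on a 72fps interpolated picture are guesses, and for the 60fps to 480fps ballerina it is seven out of every eight.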

You may be wondering, what's so bad about motion interpolation? Well, we can start by critiquing a misleading advertisement from Panasonic, released a few years ago:

There are three things that are wrong with this advertisement.

- The before-after shot is a bald-faced lie. If there is motion blur on the original image, there is going to be motion blur on the predicted image. You may be wondering if there are ways to "de-motion-blur" an image, but it is impossible without making the image look like crap. In fact, most videographers would insist on reshooting the video. If you take a look at the ballerina from Nvidia's showcase video linked earlier, you can see heavy motion blur in the original 60fps video. Not surprisingly, the interpolated frames from the Super! SloMo! 480fps video stay blurry! Is there even a point to slow motion if everything stays blurry? The following image of her leg shows the heavy motion blur that is impossible to get rid of:

- Jerky vs "Smooth": this is half true. Yes, during scenes in a video where there are slow moving objects, the motion interpolation will work correctly and you will see more frames, providing a smoother viewing experience. However, as soon as there are quick movements, the interpolation fails, and the video experience suddenly switches from 72fps to 24fps. The switch between frame rates is quite annoying and even at a constant 72fps you have objects popping in when they enter the frame. The consistency of the video frame rate should definitely take precedence over motion interpolation of slow scenes...

- "Precise": this technology fakes the motion of objects and makes up information that didn't exist. The motion generally looks unnatural. Even with Nvidia's ballerina, the edges of the dress seem to morph and fade from one location to another and contain video artifacts. This to me is the worst aspect of motion interpolation technology, but it may be the least obvious to consumers because the interpolated frames are displayed for such a small amount of time on the TV.

Furthermore, there are two non-technical reasons for my dislike of motion interpolation.

- This first reason, I admit, is a bit weaker, but motion interpolation ruins the "film look", or ignores the fact that we associate 24fps video with the Hollywood look. The antithesis to the "film look" is called the Soap Opera Effect, named after the low budget television soap operas from before the 2000s that were shot in 60fps video. This gave the shows a distinctly "behind the scenes" look, as if the viewer was on the set, which ultimately ruined the theatrical look. Much of the displeasure around the Soap Opera Effect revolves around the suspension of disbelief, or how we willingly ignore and sacrifice logic for our own enjoyment of performances and works of art. Our attempts to enter this state of mind are hampered by 60fps video, motion interpolation, or both, and this may stop us from enjoying the films created by the film maker. Which brings me to my next point...

- Lastly, watch what the film maker intended! Films go through a huge process of shooting, directing, editing, exporting, and delivery to your home, and there is nobody in that film making process using TVs with motion interpolation. If the producers intended a certain frame rate and certain image to play on your screen, why change it? The image displayed is objectively worse for very little benefit. You should honor the artistic decisions made by the producers, and watch the video as they intended.

With this in mind, there are a few Good Things that have come out of this shift in video technology. The motion interpolation tools Nvidia created are useful for creative effects, especially for people who have been using motion-interpolation plugins to artificially slow down video. These people already prepare for video shooting at high shutter speeds, and are able to add simulated motion blur after. Any improvements made in these types of tools are a boon for both film and video makers.
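For the curious, one simple way to approximate motion blur after the fact is to average several sharp sub-frames taken along the object's path. The Python sketch below shows that idea on a 1D toy frame. It is an assumption about how such an effect could be built, not a description of what any particular plugin does, and the function names are invented for this example.

```python
def render_sharp(width, position, size=2):
    """A sharp 1D frame: a bright object `size` pixels wide on a dark background."""
    return [1.0 if position <= i < position + size else 0.0 for i in range(width)]


def simulate_motion_blur(width, start, end, samples):
    """Average `samples` sharp renders spaced evenly from `start` toward `end`."""
    total = [0.0] * width
    for k in range(samples):
        # Integer position of the object for this sub-frame.
        position = start + ((end - start) * k) // samples
        frame = render_sharp(width, position)
        total = [t + f for t, f in zip(total, frame)]
    return [t / samples for t in total]


sharp = render_sharp(12, 2)
blurred = simulate_motion_blur(12, start=2, end=6, samples=4)

print(sharp)
print(blurred)
print(sum(sharp) == sum(blurred))  # same total brightness, just smeared out
```

The blurred frame keeps the same total brightness as the sharp one but spreads it over the pixels the object passed through, which is roughly what a slower shutter would have recorded in camera.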

A potential benefit to consumers is that TVs now come with high refresh rate displays to complement the motion interpolation feature and may also be able to play high frame rate content. Some may claim that high refresh displays solve pixel blurring (where the actual display is blurry), which is true, but there were few blurring problems with <=30fps videos being displayed on a standard 60Hz display to begin with.

Fortunately, you still have the ability to turn off motion interpolation on most TVs. I recommend turning it off on every TV you own by looking through the TV settings menu and disabling options like Samsung's "Auto Motion Plus" or LG's ironically named "TruMotion".

Unfortunately, I believe motion interpolation is becoming a standard feature on a specific type of movie screening called "UltraAVX" screenings. These are special movie screenings with 3D, better sound, and better seats. I remember the last time I watched an UltraAVX screening there was motion interpolation for the whole movie, although I can't seem to find a credible source to confirm this online. Normal movie screenings continue to play films at the standard 24fps rate, and I really don't miss the 3D experience (or price). Seeing as I have no control over the screenings, I really hope there aren't any attempts to change the standard movie screening experience.

Edit on 2018-12-23: It appears Tom Cruise is also on my side, as evidenced by a recent article criticising motion interpolation.